A Tutorial on Relational Language Design

SIGMOD tutorials 2026 (to appear)

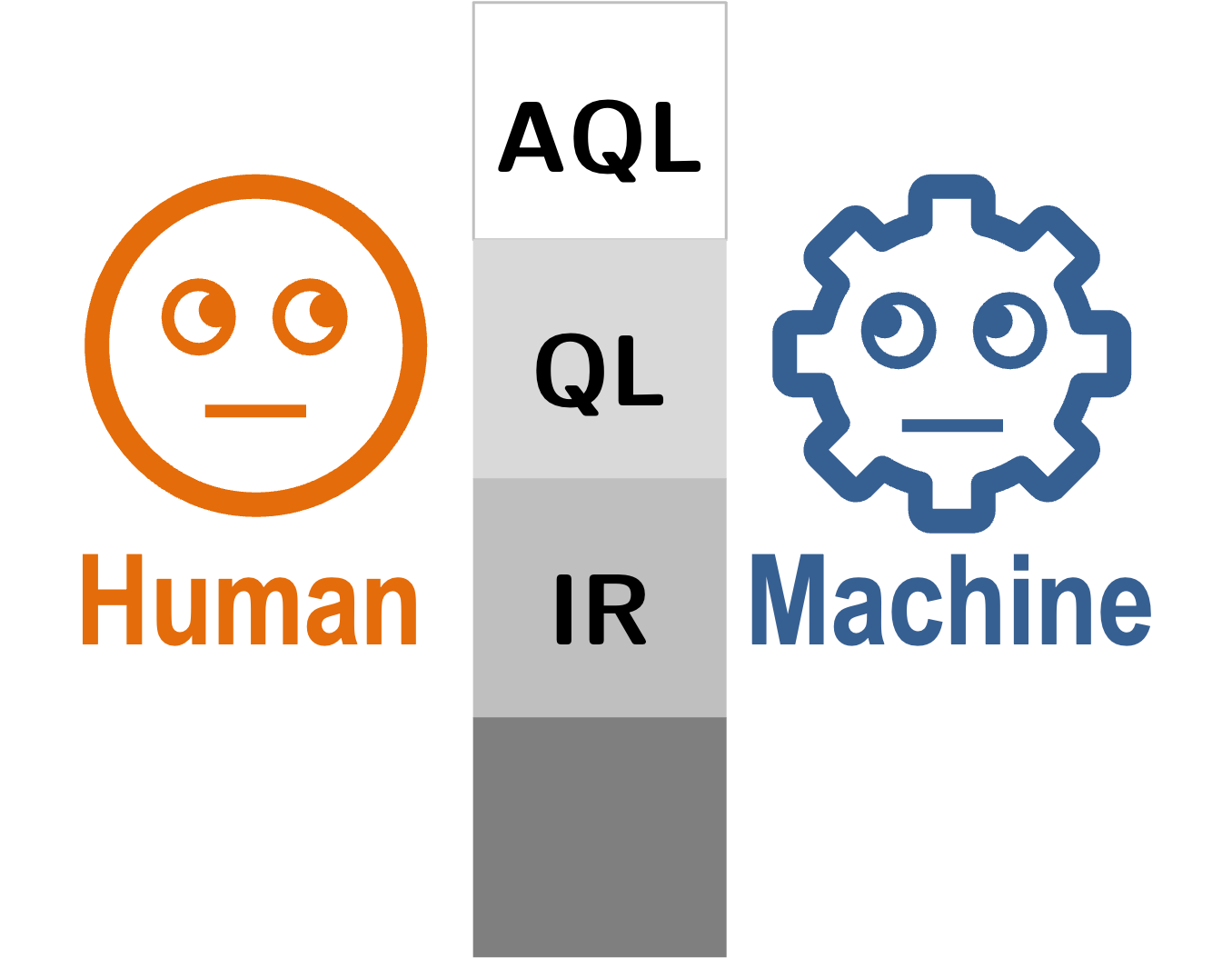

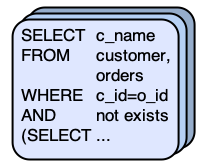

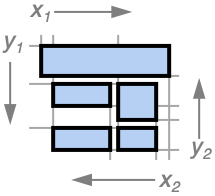

Compares SQL with classical and newer relational query languages to show how different language notations and encoding styles shape the way people and machines write, read, and edit queries.

Relational query languages have been studied and used for more

than 50 years, with SQL overwhelmingly dominant in practice. Yet

that dominance is now being questioned from several directions

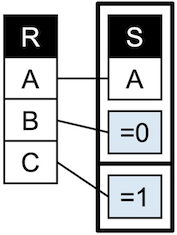

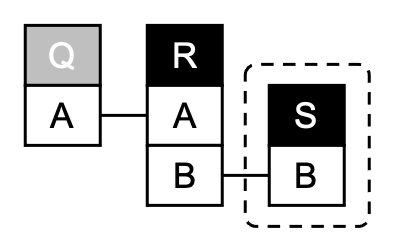

at once: higher-level abstractions such as entity-relationship and

functional data models, application languages that integrate query-

ing with application logic, algebraic intermediate representations

that blur the boundary between logical and physical specification,

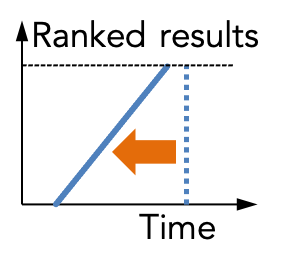

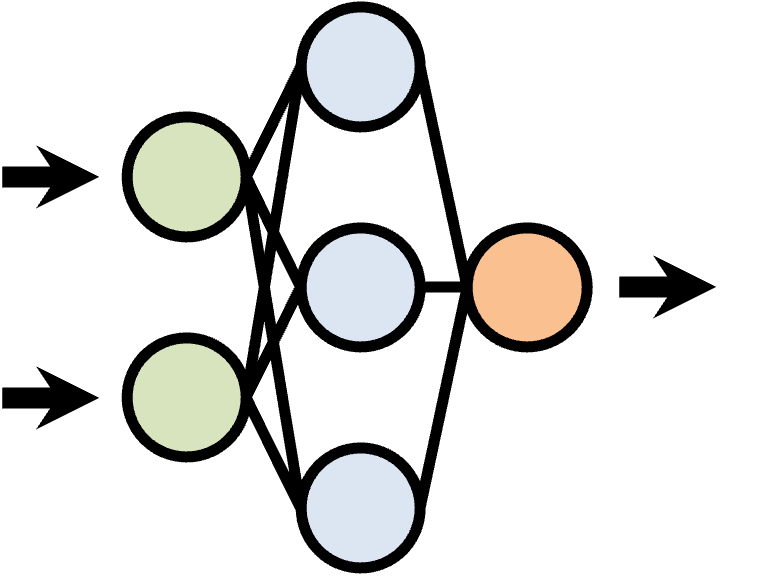

and large language models (LLMs) that both generate and explain

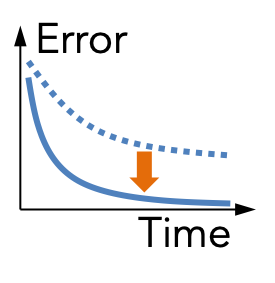

queries. These developments highlight that relational languages dif-

fer not only in expressive power, but also in what relational structure

they make explicit and in how effectively they support humans and

machines in writing, understanding, revising, and reasoning about

queries.

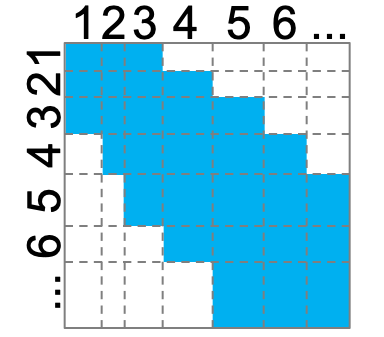

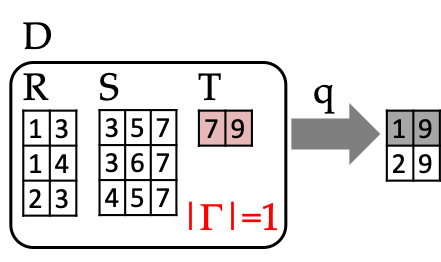

This tutorial takes this moment as an opportunity to rethink relational language design. Rather than beginning from formal definitions, we start from a fixed set of representative queries and compare how SQL, classical alternatives, and several recent and earlier languages express the same intent. We organize this comparison around four primary aspects that are often conflated: query intent, relational pattern, encoding style, and semantic conventions. From these examples, we derive a common vocabulary of recurring design dimensions and trade-offs in relational language design. Participants will leave with clearer mental models for comparing existing and proposed languages, evaluating their usability for humans and AI systems, and articulating open problems in the design and evaluation of relational query languages.

The tutorial webpage is at: https://northeastern-datalab.github.io/relational-language-tutorial.
